While reading about interference cancellation techniques today, I recalled an interesting article by Sridhar Vembu titled Two Philosophies in CDMA: A Stroll Down Memory Lane. Vembu is the founder and CEO of Zoho Corporation, a venture that has made him a billionaire. He spent time both in academia (at Princeton) and in industry (at Qualcomm), working with the likes of Sergio Verdú in one camp and Andrew Viterbi in the other. Here are some excerpts from his article, which is no longer available online at the time of this writing.

I have now worked a little over 10 years in the industry, after getting my PhD. In my very first year of work at Qualcomm, I noticed how even when speaking about the same subject, namely CDMA, academia and industry were on totally different planets. A very quick summary of the conclusions we reached 10 years ago:

1. Multi-user interference is a major problem in CDMA. Your first line of defence against it is a powerful error-correcting code. You can potentially supplement it with non-linear techniques like successive decoding and successive interference cancellation, but they suffer from parameter estimation errors, so are not [as of 1995] yet anywhere close to being practical. Instead of non-linear techniques, our focus in the paper was on linear techniques common in academia.

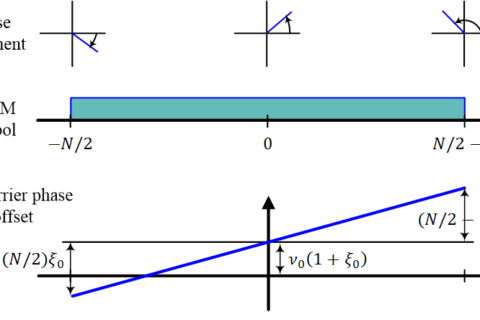

2. A second line of defence had been very common in the literature, indeed the exclusive focus of study in the academic literature [true in 1995], namely linear dimensional techniques. Many people superficially claimed these techniques were applicable to commercial Qualcomm CDMA systems then already in the market. They were not. The fundamental system model the academic community used to study their interference suppression techniques differs markedly from Qualcomm CDMA. The systems were philosophically different, and the academic CDMA model was clearly inferior from an engineering point of view.

Here are some more interesting words.

We called the academic CDMA D-CDMA (for “dimensional CDMA”, and you can also consider it “delusional CDMA” – just kidding!) and the industry CDMA as R-CDMA (for “random CDMA” or “real CDMA” if you will). D-CDMA system model assigns signature waveforms (dimensions) to users, and deals with their correlations at the receiver using a variety of linear and non-linear algorithms. If the signature waveforms are orthogonal (the ideal situation), then D-CDMA is theoretically equivalent to TDMA. D-CDMA system model simply did not assign any role to error-correcting codes, a significant omission.
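As an illustration of the orthogonal case mentioned above (my own sketch, not from Vembu's article), the snippet below assigns each user one row of a Hadamard matrix as its signature. The function names `hadamard`, `transmit` and `despread` are hypothetical, and the model assumes chip-synchronous users with no noise. Under those assumptions each user is recovered with zero interference, and the number of users cannot exceed the number of dimensions, much like slots in TDMA.

```python
def hadamard(order):
    """Sylvester construction: 2**order mutually orthogonal +/-1 codes."""
    rows = [[1]]
    for _ in range(order):
        # H_2n = [[H, H], [H, -H]]
        rows = [r + r for r in rows] + [r + [-c for c in r] for r in rows]
    return rows


def transmit(bits, codes):
    """Chip-synchronous sum of every user's bit times its signature."""
    n_chips = len(codes[0])
    return [sum(b * code[c] for b, code in zip(bits, codes))
            for c in range(n_chips)]


def despread(received, code):
    """Matched filter: correlate with one signature and normalize."""
    return sum(r * c for r, c in zip(received, code)) / len(code)


if __name__ == "__main__":
    codes = hadamard(3)                    # 8 signatures of length 8
    bits = [1, -1, -1, 1, 1, 1, -1, -1]    # one bit per user
    rx = transmit(bits, codes)
    print([despread(rx, code) for code in codes])
    # prints [1.0, -1.0, -1.0, 1.0, 1.0, 1.0, -1.0, -1.0]
```

With 8 chips there are exactly 8 orthogonal signatures, so a ninth user cannot be added without introducing correlation between signatures.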

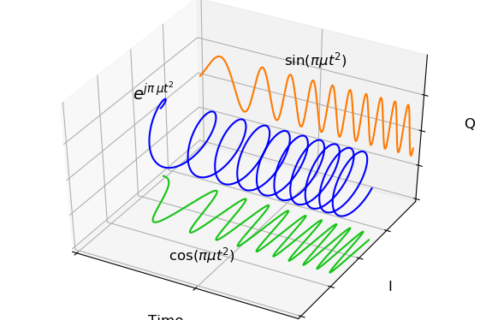

In contrast to D-CDMA, R-CDMA is a deceptively simple, even simplistic idea: simply randomize all the users with very long spreading codes, so that every other user looks like Gaussian noise, then use powerful error-correcting codes against that noise + noise-like interference.
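The randomization idea can be sketched in a few lines (again my own illustration, not from the article). The names `random_code`, `despread_interference` and `simulate` are hypothetical, and the model is deliberately crude: chip-synchronous users, equal powers, no thermal noise, and a fresh random code per symbol to mimic a very long spreading sequence. Under these assumptions the interference at the despreader output should have roughly zero mean and variance (K-1)/N for K users and N chips per symbol, and as a sum of many independent terms it should look approximately Gaussian.

```python
import random
import statistics


def random_code(n_chips, rng):
    """One symbol's worth of a long random +/-1 spreading sequence."""
    return [rng.choice((-1, 1)) for _ in range(n_chips)]


def despread_interference(n_users, n_chips, rng):
    """Interference seen by user 0 after despreading one symbol."""
    codes = [random_code(n_chips, rng) for _ in range(n_users)]
    bits = [rng.choice((-1, 1)) for _ in range(n_users)]
    # Chip-synchronous superposition of all users, no thermal noise.
    received = [sum(bits[k] * codes[k][c] for k in range(n_users))
                for c in range(n_chips)]
    stat = sum(r * c for r, c in zip(received, codes[0])) / n_chips
    # User 0's own contribution is exactly bits[0]; the rest is interference.
    return stat - bits[0]


def simulate(n_users=8, n_chips=64, n_symbols=2000, seed=1):
    """Return (mean, variance) of the interference over many symbols."""
    rng = random.Random(seed)
    samples = [despread_interference(n_users, n_chips, rng)
               for _ in range(n_symbols)]
    return statistics.mean(samples), statistics.pvariance(samples)


if __name__ == "__main__":
    mean, var = simulate()
    print("measured mean:", mean)
    print("measured variance:", var)
    print("predicted variance (K-1)/N:", (8 - 1) / 64)
```

The sketch stops at the despreader output; in the R-CDMA philosophy described above, an error-correcting decoder would then treat this residual as additional noise.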

In any practical (or theoretical!) communication receiver, statistical detection (matched filtering and other statistical signal processing like parameter estimation) and decoding work together. Any system model and analysis technique that does not consider the system holistically is prone to reach erroneous or at the very least, misleading conclusions. Yet, the vast D-CDMA academic literature was full of such statistical-detection-based analysis (they call it “demodulation”, to separate it from “decoding”). A telling sign is the emphasis on SNR in the D-CDMA literature, a notion useful in analog parameter estimation, rather than the more appropriate Eb/No, a notion that is only relevant to digital communication. In fact, D-CDMA literature, including almost every paper from my advisor, made the cardinal sin of evaluating digital communications systems based on the uncoded bit error rate.
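To make the SNR versus Eb/No distinction concrete, here is the standard relation between the two (my own addition, not part of Vembu's article). The symbols are assumptions introduced for illustration: $P$ is the received signal power, $W$ the noise bandwidth, $R_b$ the information bit rate, $E_s$ the energy per channel symbol, $r$ the code rate and $M$ the constellation size.

$$
\mathrm{SNR} = \frac{P}{N_0 W} = \frac{E_b R_b}{N_0 W} = \frac{E_b}{N_0}\cdot\frac{R_b}{W},
\qquad
E_b = \frac{E_s}{r \log_2 M}
$$

Because $E_b$ is normalized per information bit, it charges the system for the redundancy spent on coding, which is what makes coded and uncoded schemes comparable on the same axis. The SNR alone carries no such accounting.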

Thereafter, he explores the reasons behind such an impractical attitude towards research.

The reason for the love affair with statistical detection, to the exclusion of decoding, is the ivy-league academic quest for “closed form” mathematical results, at the expense of any kind of real world relevance. If your PhD doesn’t have the requisite number of Lemmas and Theorems, forget it – that seems to be the attitude. Turbo codes left leading coding theorists in the dust, with a radically simple and practical approach, which essentially achieves Shannon capacity (and that was only “proved” through simulations, no theorems, leading to enormous scepticism about the work initially). My advisor, a closet mathematician, always loved the closed form, theorem proof approach himself, and essentially prohibited me from doing any kind of “simulation work” to get a PhD. To be perfectly fair to him, that fit very well (too well!) with my own IIT Brahmin attitude about not getting hands dirty with something as mundane as writing code, an attitude I got out of after I had an epiphany that my entire PhD was useless abstractions piled on top of useless abstractions. That was what led me to abruptly end my academic career. Looking back after 10 years, that was the single best career decision I made in my life.

When I entered Qualcomm I realized what a valuable tool the computer is to study real world systems, and how limiting the quest for “closed form” can be. In fact, my introduction to the world of software arose from modeling and simulating communication receivers, and I ended up embracing software as a career eventually. I read somewhere that Wolfram quit academia and went into software for what sounded to me (maybe I imagined it!) like similar reasons: the Wolfram thesis asserts that the universe is equivalent to a Turing Machine and computer programs are the best tools to study the universe. Wolfram seems to deemphasize the theorem-proof methodology himself – in fact, he asserts in his book that most interesting questions are logically undecidable (i.e., unprovable). I agree.

This is not to criticize mathematics; indeed far from it. Simple analytically tractable models (i.e., the closed form again!) are illuminating, but they cannot substitute for more realistic models that can so often only be simulated. In particular, the holistic demodulation/decoding system cannot really be handled in any closed form solution I know of. Often, simulation can and does lead to insights into theory and can open theoretical doors that we may not suspect existed.

The reason I am writing this is to set the record straight. I have long ago moved on, and have transferred my affections to software. CDMA is like a long-lost old flame for me – it still excites some passion, but I am happily married to software now 😉

Today we have the benefit of hindsight. Looking back at the history of wireless communication techniques applied to cellular systems, I tend to agree with every idea Vembu presented in his article. Clearly, academia had much to learn from industry. I think it did pretty well on the path to 4G and 5G cellular systems, where the research gap between industry and academia was clearly narrower than in the 3G era.

Having said that, I sometimes wonder how leading communication practitioners at Qualcomm and other companies (as well as those in academia) could even agree to standardize an inferior technology like CDMA over a more powerful rival like OFDM.

- It was clear in the early days of digital cellular systems that these networks had much more to offer a mobile user than simple voice.

- Historically, the key driver of higher data rates has been the acquisition of more spectrum.

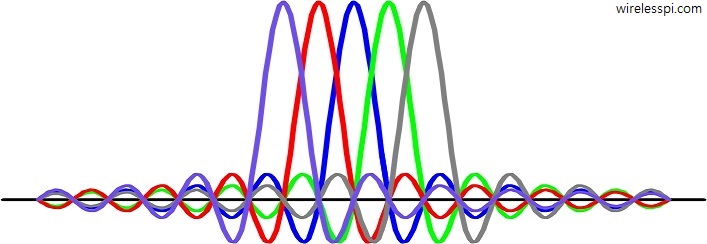

- In a CDMA system, several users share the same bandwidth at the same time, each communicating by spreading its signal over a spectrum much wider than its data rate requires.

- Seen from this perspective, CDMA was heading towards a natural ceiling that kept the system from offering higher data rates.
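The ceiling argument in the list above can be sketched with one relation (a simplified, single-carrier view; the symbols $W$, $R_b$ and $G$ are assumptions introduced here). If a user with bit rate $R_b$ is spread over a bandwidth $W$, the spreading (processing) gain is roughly

$$
G = \frac{W}{R_b} \quad\Longleftrightarrow\quad R_b = \frac{W}{G}
$$

With $W$ fixed, doubling $R_b$ halves $G$, which costs about 3 dB of interference suppression. Higher per-user rates therefore call for either more spectrum or less spreading, and the latter erodes the very mechanism the system relies on to tolerate other users.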

The basic question is very simple. Why did those smart engineers and entrepreneurs fail to realize that CDMA would soon hit a spectrum wall, whereas a flexible scheme like OFDM was better suited to the pursuit of higher spectral efficiency?