In an introduction to signals, we discussed the idea that the many activities around us, from subatomic particles to massive societal networks, are generating signals all the time. Since mathematics is the language of the universe and digital signals are nothing but quantized number sequences, it is fair to say that the workings of the universe can be mapped to an infinitely large set of signals. With these number sequences in hand, an electronic computer can process the signals and either extract information about the surrounding real-world phenomena or, even better, influence its target environment.

We saw a simple example of this shift in the comparison between a correlator and a matched filter. With the great advances in computational power, most analog circuits that performed signal processing with resistors, capacitors, inductors, op amps and so on (basically physics and devices) have been replaced by powerful digital processors that perform the necessary number crunching (basically algorithms run by computer programs) at a much better price-to-performance point. This is hardly surprising, since audio signals were being digitally processed long before the term Software Defined Radio (SDR) was coined by J. Mitola. Looking back, digital processing of radio signals now seems a straightforward step from there.

As building a Software Defined Radio (SDR) increasingly relies on Digital Signal Processing (DSP) techniques, one wonders why the field of DSP is so versatile compared to analog signal processing. The answer is in the storage of signals. Since most processes executed by nature happen in real time, storage enables us to break through the limitations of these natural systems and enter completely new realms. This is supported by plenty of examples in history.

- Tens of thousands of years ago, the storage of sounds and images in our brains kickstarted the process that led to the invention of language. Our thoughts became able to escape our minds and get stored in another person’s mind.

- The advent of agriculture around 10000 BC enabled us to store food and grain, which opened the opportunity (for some) to pursue interests unrelated to gathering or hunting for food, leading to the rapid advancement of civilization.

- The first system of writing was invented around 3000 BC by the ancient Sumerians of Mesopotamia. This storage broke the data processing limits of the human brain and also made it possible for us to convey our thoughts across both space (to far-flung areas) and time (to future generations). This is probably why Newton said: "If I have seen further, it is by standing on the shoulders of giants."

In general, a paradigm shift occurs whenever a new kind of storage arises. Thus, unlike in the analog domain, unique techniques can be exploited in DSP because the samples are stored in the processor memory. Further examples are available in communication theory. Viterbi, for instance, summarized the essence of Shannon theory for digital communications in the form of three lessons in his famous 1991 paper [1]. The first lesson was the following.

"Never discard information prematurely that may be useful in making a decision until after all decisions related to that information have been completed."

The applications of this lesson led to soft decision decoding in convolutional codes and maximum-likelihood sequence estimation in high-quality wireline modems. The inventors of Turbo Codes also exploited the storage of log-likelihood ratios for just a little extra time (according to the number of iterations), an idea that revolutionized the decoding methodology of error control codes forever.
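The benefit of keeping information until the decision is made can be illustrated with a small simulation. The sketch below is only an illustration under stated assumptions, not something taken from [1]: it uses a simple repetition code (instead of a convolutional code) with BPSK over an additive white Gaussian noise channel, and the function name `simulate` and all parameter values are hypothetical. The hard-decision receiver slices every received sample to a bit first and then takes a majority vote, while the soft-decision receiver keeps the raw samples, adds them up and decides only once.

```python
import random

def simulate(num_bits=20000, repeats=5, sigma=1.0, seed=1):
    """Count bit errors for hard- and soft-decision decoding of a repetition code.

    Each bit is sent `repeats` times as +1/-1 (BPSK) through Gaussian noise
    with standard deviation `sigma`. `repeats` should be odd to avoid ties.
    """
    rng = random.Random(seed)
    hard_errors = 0
    soft_errors = 0
    for _ in range(num_bits):
        bit = rng.getrandbits(1)
        symbol = 1.0 if bit else -1.0
        received = [symbol + rng.gauss(0.0, sigma) for _ in range(repeats)]

        # Hard decision: slice each sample to a bit, then take a majority vote.
        # The amplitude (reliability) of every sample is discarded early.
        votes = sum(1 for r in received if r > 0.0)
        hard_bit = 1 if votes > repeats // 2 else 0

        # Soft decision: keep the raw samples and decide once on their sum.
        soft_bit = 1 if sum(received) > 0.0 else 0

        hard_errors += hard_bit != bit
        soft_errors += soft_bit != bit
    return hard_errors, soft_errors

hard, soft = simulate()
print(f"hard-decision errors: {hard}, soft-decision errors: {soft}")
```

With these settings the soft-decision receiver should make noticeably fewer errors, since a weak sample near zero is not allowed to count as much as a strong one.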

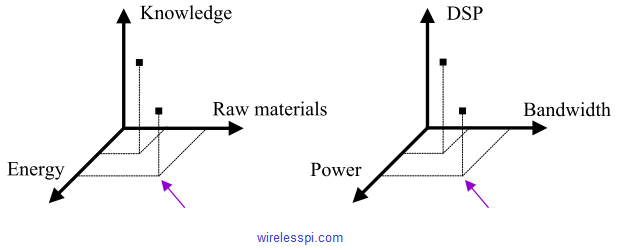

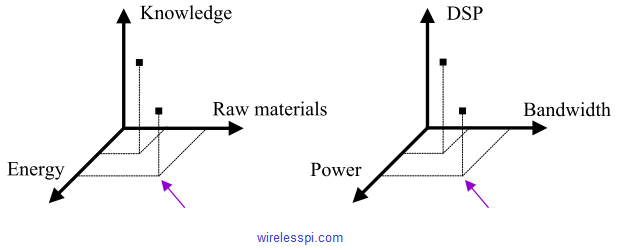

Expanding on this theme, storage alone is not enough. We must accumulate the knowledge of how to exploit the stored signals in an efficient manner. Since the early days of progress, humans have always had three resources available: raw materials, energy and knowledge. The traditional focus was on increasing the raw materials and energy resources, while gaining more knowledge was the lowest priority. For example, the world’s coal is estimated to run out before the middle of the next century. By burning billions of tonnes of coal every year, we are emptying the reserves all over the world. On the other hand, we can discover more efficient methods of producing energy from a renewable resource like sunlight or wind, and this knowledge will fulfill our needs for generations to come.

In a similar manner, the tradeoffs traditionally available to a communication systems designer were bandwidth and power. With the rise of DSP, cost-effective implementation of algorithms has become possible, adding a third dimension to our arsenal with the following equivalents.

- Bandwidth $\leftrightarrow$ raw material

- Power $\leftrightarrow$ energy

- DSP $\leftrightarrow$ knowledge

This is shown in the figure below, where DSP refers to the computational complexity of the algorithms. Here, more DSP knowledge can be traded for less bandwidth and/or power [2].
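The bandwidth and power axes of this tradeoff can be made concrete with the Shannon limit for an additive white Gaussian noise channel, which is a standard textbook result and not something taken from this article or from [2]. For a spectral efficiency of $\eta$ bits/s/Hz, reliable communication requires $E_b/N_0 \ge (2^{\eta}-1)/\eta$. The short sketch below, with the hypothetical helper name `min_ebn0_db`, evaluates this bound for a few values of $\eta$. Real systems sit above this curve, and more sophisticated algorithms (the DSP axis) are what move them closer to it.

```python
import math

def min_ebn0_db(spectral_efficiency):
    """Shannon bound on Eb/N0 (in dB) for an AWGN channel.

    `spectral_efficiency` is the data rate divided by the bandwidth,
    in bits/s/Hz, and must be positive.
    """
    eta = spectral_efficiency
    ebn0 = (2.0 ** eta - 1.0) / eta  # linear scale
    return 10.0 * math.log10(ebn0)

# Using less bandwidth for the same data rate (larger eta) costs more power.
for eta in (0.25, 0.5, 1.0, 2.0, 4.0, 6.0):
    print(f"{eta:5.2f} bits/s/Hz -> Eb/N0 >= {min_ebn0_db(eta):6.2f} dB")
```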

The advent of DSP has revolutionized wireless communications and enabled us to pack and transmit more information within the same bandwidth than the early wireless pioneers could ever have hoped for. Some of the most straightforward examples are listed below.

- Audio and video compression eliminate statistical redundancy in the stored bitstream, which results in less bandwidth required for the same information.

- At the other end, redundancy can be smartly introduced into the bitstream so that the decoding algorithms at the Rx can pull the intended message out of noise, which results in less energy (SNR) required for data transmission at the expense of bandwidth. (Gottfried Ungerboeck invented Trellis Coded Modulation (TCM) in the 1970s, which combines modulation and coding to improve the error performance within the same bandwidth.)

- Signal equalization at high data rates can be simplified by transforming the problem via OFDM, which turns a frequency-selective channel into a set of parallel narrowband subcarriers.

- Thanks to DSP algorithms, Multiple Input Multiple Output (MIMO) systems can employ several independent spatial streams (according to the number of Tx and Rx antennas) over the same channel at the same time within the same bandwidth.

- Full-duplex wireless communication builds on the knowledge from equalization concepts: radios can now transmit and receive at the same time by applying smart self-interference cancellation techniques.

- See how the knowledge of DSP can be used to build exciting technologies like LoRa PHY, a standard for low-power long-range communication.
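The OFDM item in the list above can be sketched in a few lines. The following is a minimal noise-free illustration under assumed values, not a full modem: the channel taps, the number of subcarriers, the cyclic prefix length and the function names `dft` and `ofdm_one_tap_demo` are all hypothetical, and a plain DFT is used in place of an FFT to keep the code free of dependencies. As long as the cyclic prefix covers the channel memory, the multipath channel acts as a circular convolution on each block, so equalization reduces to one complex division per subcarrier.

```python
import cmath

def dft(x, inverse=False):
    """Plain O(N^2) discrete Fourier transform (inverse scaled by 1/N)."""
    n = len(x)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        acc = sum(x[m] * cmath.exp(sign * 2j * cmath.pi * k * m / n)
                  for m in range(n))
        out.append(acc / n if inverse else acc)
    return out

def ofdm_one_tap_demo():
    n = 8                        # number of subcarriers (assumed)
    cp_len = 2                   # cyclic prefix length, >= channel memory
    channel = [1.0, 0.5, 0.2]    # hypothetical multipath taps (memory = 2)
    # One QPSK symbol per subcarrier.
    symbols = [1+1j, 1-1j, -1+1j, -1-1j, 1+1j, -1-1j, 1-1j, -1+1j]

    # Tx: inverse DFT, then prepend the last cp_len samples as cyclic prefix.
    time_block = dft(symbols, inverse=True)
    tx = time_block[-cp_len:] + time_block

    # Channel: linear convolution with the multipath taps (no noise).
    rx = [sum(channel[l] * tx[i - l]
              for l in range(len(channel)) if i - l >= 0)
          for i in range(len(tx))]

    # Rx: drop the cyclic prefix and go back to the frequency domain.
    freq = dft(rx[cp_len:cp_len + n])

    # One-tap equalizer: divide each subcarrier by the channel response there.
    h_freq = dft(channel + [0.0] * (n - len(channel)))
    equalized = [y / h for y, h in zip(freq, h_freq)]
    return symbols, equalized

sent, recovered = ofdm_one_tap_demo()
worst = max(abs(a - b) for a, b in zip(sent, recovered))
print(f"largest equalization error: {worst:.2e}")
```

A single-carrier system facing the same channel would instead need a multi-tap time-domain equalizer, which is the simplification the list item refers to. In practice the channel response has to be estimated and noise is present, both of which this sketch leaves out.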

Current research is focused on applying machine learning techniques to wireless communications. Radio waves are not much different from sound waves. As predicted by some, if devices do become intelligent at some point in the future, transmitters and receivers along with antennas give them the opportunity to talk among themselves, just like we use a voice box along with vocal cords to transmit our voice and ears to receive the generated vibrations. Since digital communication is all mathematics at its heart and no one is better at computations than machines, there is a possibility that we will have entirely new ways of communicating through a wireless channel, devised by the machines themselves and unbeknownst to us.

References

[1] A. Viterbi. Wireless digital communication: A view based on three lessons learned, IEEE Communications Magazine, 29(9), 1991.

[2] H. Meyr, M. Moeneclaey, and S. Fechtel. Digital Communication Receivers, Volume 2: Synchronization, Channel Estimation, and Signal Processing, Wiley, 1998.